CBSE Maths: How AI Handles Board-Style Questions and Handwritten Solutions

CBSE maths grading is the process of evaluating student answer sheets against the Central Board of Secondary Education's official marking scheme, which specifies marks for each step of a solution, not just the final answer. AI-powered CBSE maths grading uses optical character recognition and machine learning to read handwritten student papers, follow step-by-step working, assign partial marks according to CBSE criteria, and deliver instant detailed feedback — a process that traditionally takes a teacher 8-12 minutes per paper and now takes seconds.

For the 22 lakh+ students who appear for CBSE Class 10 and Class 12 maths exams each year, and for the crores more who practise with CBSE-pattern papers in coaching centres across India, the accuracy and fairness of maths grading directly affects outcomes. Understanding how AI handles CBSE board-style questions — including the nuances of step marking, partial credit, and alternative methods — is essential for any coaching centre or school considering automated assessment.

The CBSE Maths Marking Scheme Explained

Before understanding how AI grades CBSE maths, you need to understand how CBSE expects maths to be graded. The marking scheme is not a simple right-or-wrong system — it is a structured, step-by-step evaluation framework.

The Step-Mark System

CBSE maths papers are divided into sections by mark value:

Class 10 (Standard Maths, 2026 pattern):

- Section A: 20 multiple-choice questions (1 mark each) = 20 marks

- Section B: 5 very short answer questions (2 marks each) = 10 marks

- Section C: 6 short answer questions (3 marks each) = 18 marks

- Section D: 4 long answer questions (5 marks each) = 20 marks

- Section E: 3 case-based/integrated questions (4 marks each) = 12 marks

- Total: 80 marks

Class 12 (2026 pattern):

- Section A: 18 MCQs + 2 assertion-reason (1 mark each) = 20 marks

- Section B: 5 very short answer questions (2 marks each) = 10 marks

- Section C: 6 short answer questions (3 marks each) = 18 marks

- Section D: 4 long answer questions (5 marks each) = 20 marks

- Section E: 3 case-based questions (4 marks each) = 12 marks

- Total: 80 marks

How Step Marks Work

For a 3-mark question, CBSE does not simply award 3 for correct and 0 for incorrect. The marking scheme specifies intermediate marks. Here is an example:

Question (3 marks): Find the zeroes of the quadratic polynomial x^2 - 3x - 10 and verify the relationship between the zeroes and the coefficients.

CBSE Marking Scheme:

| Step | Work Expected | Marks |
|---|---|---|
| 1 | Factorise: x^2 - 3x - 10 = (x - 5)(x + 2) | 1 |
| 2 | Find zeroes: x = 5, x = -2 | 0.5 |
| 3 | Sum of zeroes = 5 + (-2) = 3 = -b/a = -(-3)/1 = 3 | 0.5 |
| 4 | Product of zeroes = 5 × (-2) = -10 = c/a = -10/1 = -10 | 0.5 |
| 5 | Hence verified | 0.5 |

A student who correctly factorises and finds the zeroes (Steps 1-2) but makes an error in verification (Steps 3-5) receives 1.5 marks out of 3. A student who uses an alternative correct method (quadratic formula instead of factorisation) receives full marks if the working is correct.
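
The step-mark table above can be treated as data. The following is a minimal Python sketch of that idea, not CBSE's or any vendor's actual implementation; the names `SCHEME` and `score_steps` are hypothetical, and `Fraction` is used only so that half marks add up exactly.

```python
from fractions import Fraction

# Hypothetical encoding of the 3-mark scheme above: (step label, marks).
SCHEME = [
    ("factorise", Fraction(1)),
    ("find zeroes", Fraction(1, 2)),
    ("verify sum", Fraction(1, 2)),
    ("verify product", Fraction(1, 2)),
    ("conclusion", Fraction(1, 2)),
]

def score_steps(scheme, correct_steps):
    """Sum the marks of every step that was judged correct."""
    return sum((marks for label, marks in scheme if label in correct_steps),
               Fraction(0))

# Student gets Steps 1-2 right, then slips in the verification.
awarded = score_steps(SCHEME, {"factorise", "find zeroes"})
total = score_steps(SCHEME, {label for label, _ in SCHEME})
print(float(awarded), "out of", float(total))  # 1.5 out of 3.0
```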

The Partial Credit Principle

CBSE's marking philosophy is explicit: marks are awarded for correct steps, even if the final answer is wrong. This principle is documented in the CBSE Marking Scheme Guidelines issued to examiners every year.

Key rules:

- If the student uses a correct method but makes a computational error, marks are awarded for correct steps up to the point of the error.

- If subsequent steps follow logically from the incorrect result (consistent error), marks may be awarded for the method even after the error.

- Alternative correct methods are accepted unless the question specifies a particular approach.

- If the student skips steps but arrives at the correct answer, full marks are awarded (the correct answer implies correct working).

- Diagrams and graphs carry marks when specified in the marking scheme.
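
The consistent-error rule is the least obvious of these, so here is a small sketch of how it might be applied to the 3-mark polynomial question. The three verdict labels and the `award` function are assumptions made for illustration; marks are counted in half-mark units, and follow-through credit is a switch because the rule says such marks "may be" awarded.

```python
# Each step carries one of three hypothetical verdicts:
#   "correct"        - matches the marking scheme
#   "follow_through" - wrong value, but correctly derived from an earlier error
#   "wrong"          - a fresh error in method or computation
def award(steps, allow_follow_through=True):
    """Return marks earned, in half-mark units, for (half_marks, verdict) pairs."""
    earned = 0
    for half_marks, verdict in steps:
        if verdict == "correct":
            earned += half_marks
        elif verdict == "follow_through" and allow_follow_through:
            earned += half_marks
    return earned

# Student writes x^2 - 3x - 10 = (x - 5)(x - 2): a sign error in Step 1.
# The zeroes 5 and 2 are then read off correctly from that wrong factorisation.
attempt = [
    (2, "wrong"),           # Step 1: factorisation (1 mark)
    (1, "follow_through"),  # Step 2: zeroes consistent with Step 1 (0.5 mark)
    (1, "wrong"),           # Step 3: sum check fails, since 5 + 2 is not 3
    (1, "wrong"),           # Step 4: product check fails, since 5 * 2 is not -10
    (1, "wrong"),           # Step 5: conclusion
]
print(award(attempt) / 2)                              # 0.5
print(award(attempt, allow_follow_through=False) / 2)  # 0.0
```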

Why This Matters for AI Grading

The CBSE marking scheme is precisely the type of structured, rule-based system that AI can handle with high accuracy. The rules are well-defined: each step has a mark allocation, partial credit follows explicit principles, and alternative methods are documented. This is fundamentally different from subjective grading (like English essays) where evaluation criteria are inherently more ambiguous.

For CBSE maths grading specifically, the AI's task is clear: read the student's handwritten working, map each step to the marking scheme, apply partial credit rules, and output a score with step-level feedback.

Challenges of Grading Handwritten CBSE Maths

Manual CBSE maths grading is difficult for several reasons, and understanding these challenges explains why AI offers such a significant improvement.

Handwriting Variability

Every student writes differently. Indian students' handwriting varies by:

- Regional writing styles: Students from different states develop different handwriting patterns based on the scripts they learn alongside English.

- Speed vs clarity trade-off: During timed exams, students write quickly. Quick handwriting is often messy — digits blur together, minus signs look like division bars, 6s look like 0s, and 1s look like 7s.

- Mathematical notation: Students represent fractions, square roots, exponents, and integrals in varying ways. Some use standard notation; others improvise.

- Layout variations: Some students work neatly from top to bottom. Others fill margins, squeeze extra working between lines, or use arrows to connect steps that are physically distant on the page.

A CBSE examiner who marks hundreds of papers over an evaluation cycle encounters hundreds of different handwriting styles. Maintaining consistent interpretation across all of them is cognitively exhausting.

Volume and Time Pressure

CBSE board exam correction is a massive logistical operation:

- 22 lakh+ maths papers must be corrected within a 2-3 week window

- Each examiner is assigned approximately 25-30 papers per day

- Detailed step marking is expected, but time pressure incentivises shortcuts

- Fatigue accumulates — paper 25 does not receive the same careful attention as paper 1

In coaching centres, the problem is different but equally severe. Weekly practice tests generate a continuous stream of papers. A coaching centre in Delhi with 400 students across Class 10 and Class 12 generates 400 maths papers per week. Even with dedicated correctors, the backlog grows.

Inconsistency Across Examiners

CBSE has acknowledged the problem of inter-examiner variability. Studies of re-evaluation requests reveal that:

- Scores can differ by 5-15 marks on a 100-mark paper when corrected by different examiners

- The variance is highest on 3-mark and 5-mark questions where step-marking judgement is required

- Examiners develop personal biases — some are lenient with computational errors, others are strict

- Fatigue and speed introduce random variability even within a single examiner's marking

For coaching centres, this inconsistency means that a student's practice test score depends partly on who corrects the paper — undermining the diagnostic value of the assessment.

Diagnostic Feedback Is Rare

The most valuable outcome of grading is not the score — it is the feedback. Which concept did the student misunderstand? Which computational skill needs practice? Where exactly did the method go wrong?

In practice, CBSE board exam correction provides almost no diagnostic feedback. Students receive a score sheet. Coaching centre correction provides more, but it is limited by time — a teacher spending 10 minutes per paper can mark steps and award scores, but writing detailed comments on 150 papers is not humanly feasible.

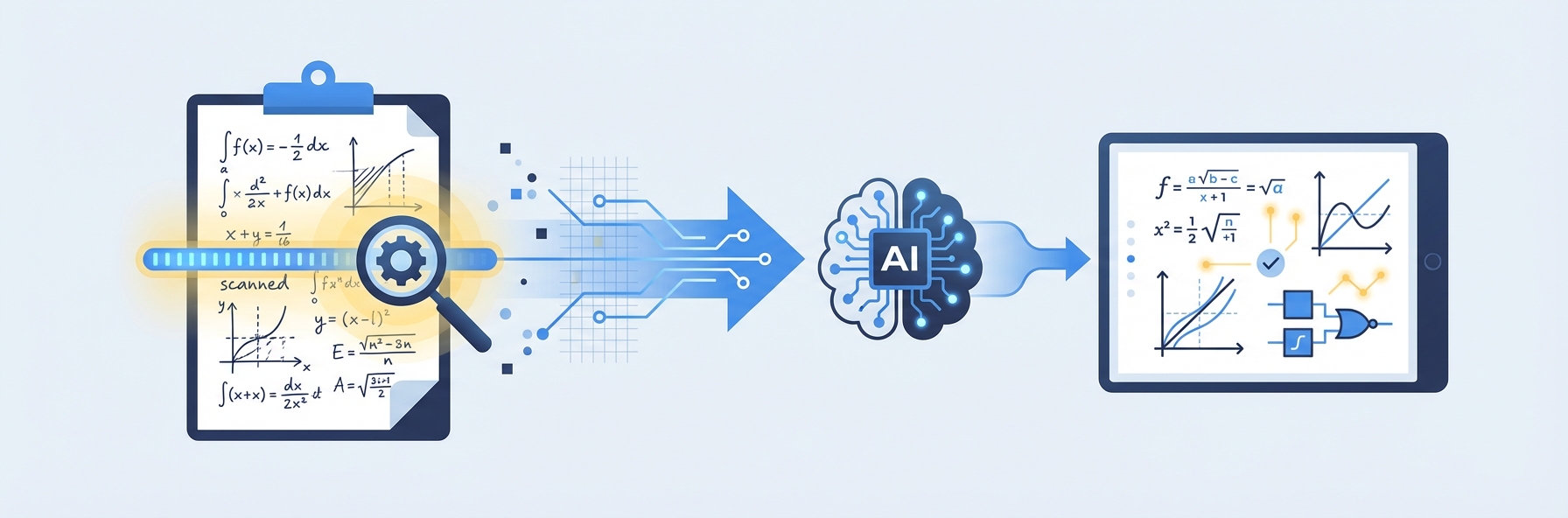

How AI/OCR Reads Handwritten CBSE Maths Solutions

The technology behind AI-powered CBSE maths grading combines several AI systems working in sequence. Here is how the process works, step by step.

Stage 1: Image Capture and Pre-Processing

The student completes their maths test on paper, exactly as they would in a board exam. The completed answer sheet is photographed using a smartphone camera. The AI system pre-processes the image:

- Deskewing: Corrects for tilted or rotated photographs

- Contrast enhancement: Improves the visibility of handwriting against the paper background

- Noise reduction: Removes shadows, smudges, and background patterns

- Region detection: Identifies the boundaries of each question's working, separating question 1's solution from question 2's

This pre-processing stage is critical for Indian conditions, where students use varied paper quality, photography happens in different lighting conditions, and smartphone cameras range from basic to advanced.
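
To make two of these operations concrete, the sketch below shows contrast enhancement followed by a simple ink/paper threshold on a tiny grayscale patch. It is a toy illustration in plain Python, assuming 0-255 pixel values; a real pipeline would normally use an image-processing library and more robust methods, and the function names here are hypothetical.

```python
def stretch_contrast(gray):
    """Linearly rescale pixels so the darkest becomes 0 and the brightest 255."""
    lo = min(min(row) for row in gray)
    hi = max(max(row) for row in gray)
    if hi == lo:  # flat image: nothing to stretch
        return [row[:] for row in gray]
    return [[(p - lo) * 255 // (hi - lo) for p in row] for row in gray]

def binarise(gray, threshold=128):
    """Mark pixels darker than the threshold as ink (1), the rest as paper (0)."""
    return [[1 if p < threshold else 0 for p in row] for row in gray]

# A washed-out 3x4 patch: faint pencil strokes (about 160) on bright paper (about 200).
patch = [
    [200, 198, 201, 199],
    [199, 160, 162, 200],
    [201, 200, 199, 198],
]
print(binarise(patch))                    # no ink found: every pixel is above 128
stretched = stretch_contrast(patch)
print(binarise(stretched))                # [[0, 0, 0, 0], [0, 1, 1, 0], [0, 0, 0, 0]]
```

Without the stretch, the fixed threshold misses the pencil strokes entirely, which is the kind of failure poor lighting tends to cause.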

Stage 2: Handwriting Recognition (OCR)

The specialised OCR engine reads the handwritten content within each detected region:

- Character recognition: Identifies individual digits (0-9), letters (a-z, most commonly the variables x, y, and n), and mathematical symbols (+, -, ×, /, =, <, >, √, etc.)

- Spatial parsing: Understands two-dimensional mathematical layout — a number above and to the right is an exponent, a horizontal line between expressions is a fraction, text under a radical sign is under a square root

- Expression construction: Assembles recognised characters and spatial relationships into complete mathematical expressions (e.g., "x^2 - 3x - 10 = 0")

- Step segmentation: Identifies each line or step of the student's working as a distinct unit of evaluation

Modern OCR trained specifically on handwritten maths achieves 95%+ character-level accuracy. For CBSE maths grading, the system is further optimised for the specific notation patterns that Indian students use.
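
Spatial parsing and expression construction can be illustrated with a deliberately simple rule: a glyph whose centre sits well above the writing line is treated as an exponent. The `Glyph` type, the coordinates, and the 0.3 threshold are all assumptions for this sketch, and it handles only single-glyph exponents; real recognisers use far richer layout models.

```python
from dataclasses import dataclass

@dataclass
class Glyph:
    char: str  # symbol recognised by the character-recognition step
    x: float   # left edge in pixels
    y: float   # vertical centre in pixels (smaller means higher on the page)

def linearise(glyphs, line_y, line_height):
    """Join glyphs left to right, writing raised glyphs as '^' exponents."""
    out = []
    for g in sorted(glyphs, key=lambda g: g.x):
        raised = g.y < line_y - 0.3 * line_height
        out.append("^" + g.char if raised else g.char)
    return "".join(out)

glyphs = [
    Glyph("x", 0, 50), Glyph("2", 12, 32), Glyph("-", 30, 50),
    Glyph("3", 45, 50), Glyph("x", 58, 50), Glyph("-", 75, 50),
    Glyph("1", 90, 50), Glyph("0", 102, 50), Glyph("=", 120, 50),
    Glyph("0", 138, 50),
]
print(linearise(glyphs, line_y=50, line_height=40))  # x^2-3x-10=0
```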

Stage 3: Mathematical Evaluation

Once the AI has converted handwritten working into machine-readable mathematical expressions, it evaluates each step:

- Step 1 evaluation: Does the student's first line of working correctly transform the problem? For a factorisation problem, did they correctly identify the factors?

- Step-to-step logic: Does each subsequent step follow logically from the previous one? Even if step 1 contains an error, are steps 2 and 3 logically consistent with the incorrect result from step 1?

- Final answer check: Does the student's final answer match the expected answer?

- Alternative method detection: Has the student used a valid but different approach from the one in the marking scheme? (e.g., quadratic formula instead of factorisation)
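
One way to check a step without matching text is to test mathematical equivalence. The sketch below is an illustration under assumptions, not a description of any product's engine: it compares a student's factorisation with the original quadratic at three points (enough to pin down a polynomial of degree 2) and shows that the quadratic formula, an alternative method, reaches the same zeroes.

```python
import math

def same_polynomial(f, g, degree):
    """Polynomials of degree <= `degree` are identical if they agree
    at degree + 1 distinct points."""
    return all(f(x) == g(x) for x in range(degree + 1))

original = lambda x: x**2 - 3*x - 10
student_ok = lambda x: (x - 5) * (x + 2)
student_bad = lambda x: (x - 5) * (x - 2)

print(same_polynomial(original, student_ok, 2))   # True
print(same_polynomial(original, student_bad, 2))  # False

def zeroes_by_formula(a, b, c):
    """Real zeroes of ax^2 + bx + c via the quadratic formula (assumes D >= 0)."""
    root = math.sqrt(b * b - 4 * a * c)
    return sorted([(-b - root) / (2 * a), (-b + root) / (2 * a)])

print(zeroes_by_formula(1, -3, -10))  # [-2.0, 5.0]
```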

Stage 4: Mark Assignment per CBSE Rules

The AI applies CBSE marking scheme rules to assign marks:

- Full marks for each correct step per the marking scheme allocation

- Partial marks for correct method with computational errors

- Consistent error handling: marks awarded for correct logical steps following an initial error

- Full marks for correct final answer even if intermediate steps are not shown

- Alternative method recognition with equivalent mark allocation

Stage 5: Feedback Generation

This is where AI CBSE maths grading surpasses manual correction. For every graded question, the system generates:

- Step-level marks: Exactly how many marks were awarded at each step, and why

- Error identification: The specific step where the error occurred, what the error was, and what the correct approach should have been

- Concept tagging: Which mathematical concept the question tests and whether the student has demonstrated mastery

- Revision recommendation: Based on the error pattern, which chapter or topic the student should review

A manual corrector doing the same for 150 papers would take days. The AI delivers it in seconds.
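
As a rough picture of what such a feedback record might contain, the sketch below assembles step-level marks, the first step that lost marks, and a revision pointer. The field names, the question label "Q21", and the comment text are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

@dataclass
class StepResult:
    label: str
    max_marks: float
    awarded: float
    note: str = ""  # comment shown to the student; empty when the step is correct

def build_feedback(question, concept, steps):
    """Collect step-level marks and point at the first step that lost marks."""
    first_error = next((s for s in steps if s.awarded < s.max_marks), None)
    return {
        "question": question,
        "concept": concept,
        "score": sum(s.awarded for s in steps),
        "out_of": sum(s.max_marks for s in steps),
        "first_error": first_error.label if first_error else None,
        "comment": first_error.note if first_error else "All steps correct.",
        "revise": concept if first_error else None,
    }

steps = [
    StepResult("Factorise", 1.0, 1.0),
    StepResult("Find zeroes", 0.5, 0.5),
    StepResult("Verify sum", 0.5, 0.0, "Sum of zeroes is 5 + (-2) = 3, not 7."),
    StepResult("Verify product", 0.5, 0.5),
    StepResult("Conclusion", 0.5, 0.0, "Conclusion depends on both checks."),
]
report = build_feedback("Q21", "Polynomials: zeroes and coefficients", steps)
print(report["score"], "/", report["out_of"], "-", report["comment"])
```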

Accuracy Across Different CBSE Question Types

AI CBSE maths grading does not perform equally across all question types. Understanding where the AI excels and where it faces challenges helps coaching centres set appropriate expectations.

1-Mark MCQs: Near-Perfect Accuracy

Multiple-choice questions are the simplest for AI to grade. The student selects an option; the AI checks it against the answer key. Accuracy is effectively 100%.

For CBSE maths, the MCQ section (Section A) includes 20 questions covering a range of topics. The AI handles these instantly and without error, provided the student marks their answers clearly.

2-Mark Short Answer Questions: 97%+ Accuracy

Two-mark questions typically require 2-3 steps of working. Examples:

- "Find the discriminant of 2x^2 - 4x + 3 = 0"

- "Find the nth term of the AP: 7, 13, 19, 25, ..."

These questions have limited solution length, clear step sequences, and well-defined marking criteria. The AI handles them with very high accuracy (97%+), including correct partial mark assignment when the student's method is correct but the computation has an error.
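
Both example questions reduce to a single formula, which is part of why they are easy to check automatically. A short sketch using the standard formulas (the function names are illustrative):

```python
def discriminant(a, b, c):
    """D = b^2 - 4ac for the quadratic ax^2 + bx + c = 0."""
    return b * b - 4 * a * c

def nth_term(first, common_difference, n):
    """a_n = a + (n - 1)d for an arithmetic progression."""
    return first + (n - 1) * common_difference

print(discriminant(2, -4, 3))                 # -8, so there are no real roots
print(nth_term(7, 6, 1), nth_term(7, 6, 4))   # 7 25
```

For the AP 7, 13, 19, 25, ... the general term simplifies to 6n + 1.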

3-Mark Short Answer Questions: 95%+ Accuracy

Three-mark questions involve more extended working:

- Solving quadratic equations by factorisation or formula

- Finding zeroes of polynomials and verifying relationships

- Problems involving arithmetic progressions

- Basic trigonometric proofs

The AI reads multi-line handwritten working, evaluates each step, and assigns marks according to the marking scheme. Accuracy remains high (95%+) because the solution structures are well-defined and the marking criteria are explicit.

5-Mark Long Answer Questions: 92%+ Accuracy

Five-mark questions are the most complex:

- Multi-step word problems (speed-distance-time, mixture problems, boat/stream)

- Construction problems with geometric proofs

- Statistics questions (mean, median, mode from grouped data)

- Coordinate geometry proofs

These questions involve longer working, more handwriting to parse, and greater variety in solution approaches. The AI achieves 92%+ accuracy, with the remaining cases typically involving:

- Unusual handwriting layout that confuses the spatial parser

- Alternative methods not in the primary marking scheme (the AI flags these for human review)

- Diagram-dependent questions where geometric constructions need visual evaluation

Case-Based Questions (4 marks): 93%+ Accuracy

CBSE's case-based questions present a real-world scenario followed by sub-questions. For example, a question might describe a park's dimensions and ask students to calculate area, perimeter, or solve a related equation.

The AI handles these well because the sub-questions are typically standard mathematical calculations with clear marking criteria. The scenario text provides context but the grading is based on the mathematical working.

Where AI Flags for Human Review

Approximately 5-8% of answers are flagged for human review rather than automatically graded. Common reasons:

- Unclear handwriting: The OCR cannot confidently read a digit or symbol

- Unusual layout: The student's working does not follow a standard top-to-bottom flow

- Novel method: The student used an approach not in the marking scheme (may be correct but needs human verification)

- Diagram-heavy questions: Geometric constructions and graph-plotting questions that require visual assessment

Coaching centre faculty review only these flagged items — typically 5-10 answers per batch of 50 papers — rather than correcting every paper manually.
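
The flagging logic can be pictured as a set of simple checks. This is a sketch under assumptions: the 0.90 confidence cut-off, the input fields, and the function name are invented for illustration and do not describe any particular product's thresholds.

```python
def needs_review(symbol_confidences, method_recognised, has_diagram,
                 min_confidence=0.90):
    """Return the reasons an answer should go to a human; empty means auto-grade."""
    reasons = []
    if any(c < min_confidence for c in symbol_confidences):
        reasons.append("unclear handwriting")
    if not method_recognised:
        reasons.append("novel method")
    if has_diagram:
        reasons.append("diagram needs visual assessment")
    return reasons

print(needs_review([0.99, 0.97, 0.98], True, False))   # []
print(needs_review([0.99, 0.62, 0.98], False, False))  # ['unclear handwriting', 'novel method']
```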

Comparison: AI Grading vs Manual CBSE Maths Grading

| Factor | Manual Grading | AI Grading (IntelGrader) |
|---|---|---|
| Time per paper | 8-12 minutes | Under 30 seconds |
| Turnaround (50 papers) | 7-10 hours | Under 30 minutes |
| Consistency | Varies by examiner and fatigue | Identical rubric applied every time |
| Partial marks accuracy | Good (experienced examiner) | 95%+ with CBSE scheme configured |
| Step-level feedback | Rare (time-prohibitive) | Every question, every paper |
| Student receives results | 3-7 days | Same day |
| Cost per paper | ₹10-25 (corrector time) | Significantly lower at scale |
| Scalability | Linear (more papers = more time) | Near-instant (500 papers ≈ same time as 50) |
| Common errors identified | Faculty intuition | Data-driven, across entire batch |
| Works at 2 AM | No | Yes |
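
The turnaround figures follow directly from the per-paper times. A quick check of the arithmetic, assuming papers are processed one after another at the upper bound of 30 seconds each:

```python
papers = 50

# Manual: 8 to 12 minutes per paper, converted to hours for the whole batch.
manual_hours = [papers * minutes / 60 for minutes in (8, 12)]

# AI: 30 seconds per paper, converted to minutes for the whole batch.
ai_minutes = papers * 30 / 60

print([round(h, 1) for h in manual_hours], ai_minutes)  # [6.7, 10.0] 25.0
```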

Where Manual Grading Still Has an Edge

To be fair, experienced CBSE maths examiners bring advantages that current AI does not fully replicate:

- Interpretation of ambiguous handwriting by using context clues that the AI cannot always access

- Recognition of creative solution approaches that are mathematically valid but unconventional

- Holistic assessment of student capability based on the "feel" of the paper — something that experienced examiners develop over years

- Handling of diagram and construction questions where spatial evaluation of physical drawings is required

The ideal system — and what IntelGrader enables — is AI handling the 90-95% of grading that is straightforward, while human expertise is applied to the 5-10% that genuinely requires judgement.

Preparing Students with AI-Powered CBSE Maths Feedback

The ultimate goal of CBSE maths grading is not the score — it is the learning that results from the assessment. AI-powered grading transforms the feedback loop in ways that manual correction cannot match.

Instant Error Analysis

When a student submits a practice test and receives AI-graded results within minutes, the test experience is still fresh. They can immediately see:

- Which questions they got wrong

- Exactly which step contained the error

- What the correct working should have been

- Which concept they need to revise

Compare this with receiving results 3-7 days later, when the student has moved on to new chapters and the test questions are half-forgotten. Delayed feedback delivers only a fraction of the learning value of instant feedback.

Concept-Level Weakness Mapping

Over multiple practice tests, AI grading builds a detailed map of each student's strengths and weaknesses at the concept level:

- "Student A consistently makes errors in trigonometric identities (Chapter 8) — scoring 45% on identity-based questions but 85% on application questions."

- "Student B's factorisation is strong but she loses marks on verification steps — a procedural gap, not a conceptual one."

- "Student C's arithmetic accuracy is 72% — below the batch average of 88%. Computational practice needed."

This level of diagnostic detail enables targeted revision. Instead of telling a student to "revise maths," the teacher can say: "Focus on trigonometric identities and do 20 extra practice problems this week." That specificity makes revision more efficient and more motivating.
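
A concept-level map of this kind is, at its core, an aggregation of marks by concept tag. The sketch below uses made-up results for one student, chosen to reproduce the 45% and 85% figures in the Student A example; the 60% "weak" cut-off and all names are assumptions.

```python
from collections import defaultdict

def concept_accuracy(results):
    """results: iterable of (concept, marks_awarded, marks_available).
    Returns {concept: percentage of available marks earned}."""
    earned = defaultdict(float)
    available = defaultdict(float)
    for concept, got, out_of in results:
        earned[concept] += got
        available[concept] += out_of
    return {c: round(100 * earned[c] / available[c]) for c in earned}

# Hypothetical results for one student across several practice tests.
history = [
    ("trig identities", 1.5, 3), ("trig identities", 1.0, 3),
    ("trig identities", 2.0, 4),
    ("trig applications", 4.5, 5), ("trig applications", 4.0, 5),
]
accuracy = concept_accuracy(history)
weak = [c for c, pct in accuracy.items() if pct < 60]
print(accuracy)  # {'trig identities': 45, 'trig applications': 85}
print(weak)      # ['trig identities']
```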

Batch-Level Insights for Teachers

CBSE maths grading data, aggregated across an entire batch, reveals patterns that inform teaching:

- If 60% of the batch gets a question wrong, the problem is likely with the teaching (or the question), not the students. The teacher knows to revisit that topic.

- If errors cluster in computation rather than method, the batch needs arithmetic drilling, not conceptual re-teaching.

- If Class 10 students consistently score low on case-based questions, the centre needs to dedicate more practice time to the new question format that CBSE has introduced.

These insights are available instantly after each test — not after a teacher manually tallies scores in a spreadsheet, which rarely happens due to time constraints.
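
The first of these patterns, spotting questions that most of the batch got wrong, needs only a per-question failure rate. A minimal sketch with invented data for a batch of five students; the question labels and function name are hypothetical, and the 60% threshold mirrors the example above.

```python
def hard_questions(batch, threshold=0.60):
    """batch: {question: list of booleans, True if that student lost marks}.
    Returns questions where at least `threshold` of students lost marks."""
    return [q for q, lost in batch.items()
            if sum(lost) / len(lost) >= threshold]

batch = {
    "Q5":  [False, False, True, False, False],  # 20% lost marks
    "Q12": [True, True, True, False, True],     # 80% lost marks
    "Q30": [True, False, True, True, False],    # 60% lost marks
}
print(hard_questions(batch))  # ['Q12', 'Q30']
```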

Board Exam Readiness Tracking

For Class 10 and Class 12 students preparing for CBSE board exams, AI grading enables a structured readiness tracking system:

- Monthly readiness score based on practice test performance across all chapters

- Chapter completion status showing which chapters have been tested and at what mastery level

- Predicted board exam range based on practice test trends (motivating for students, informative for parents)

- Targeted revision plan generated automatically based on identified gaps

Parents — who are investing ₹2-5 lakh per year in coaching — receive structured, data-backed reports via WhatsApp instead of vague verbal updates. This transparency builds trust, reduces parent anxiety, and differentiates the coaching centre from competitors who cannot provide this level of insight.

Preparing for the New CBSE Pattern

CBSE has been evolving its exam pattern, with increasing emphasis on:

- Competency-based questions that test application, not just recall

- Case-based questions that embed mathematical problems in real-world contexts

- Internal choice that allows students to choose between questions

AI grading platforms can be configured to align with these new patterns, ensuring that practice tests mirror the actual board exam format. As CBSE updates its question design, the marking scheme in the AI system is updated accordingly — something that is easier and faster than retraining human examiners.

Implementation for Coaching Centres

Coaching centres preparing students for CBSE maths board exams can implement AI-powered CBSE maths grading in a straightforward process:

- Upload your CBSE-pattern question papers — use your existing test series, sample papers, or past board exam papers.

- Configure the marking scheme — define step marks for each question, matching CBSE guidelines.

- Students write and submit — papers are completed on standard answer sheets and photographed with a smartphone.

- AI grades instantly — results, step-level marks, and feedback are available within minutes.

- Faculty reviews flagged items — only 5-10% of answers need human attention.

- Share progress reports — send structured performance updates to parents via WhatsApp.

The process works with any CBSE maths content: NCERT textbook exercises, sample papers, previous year papers, and custom test series.

FAQ

Can AI accurately grade CBSE maths step-by-step working?

Yes. AI grading platforms like IntelGrader are specifically designed to evaluate handwritten step-by-step maths working according to CBSE marking scheme rules. The AI reads each line of the student's solution, evaluates whether the step is mathematically correct, assigns the appropriate marks per the scheme, and handles partial credit for correct method with computational errors. Accuracy on standard CBSE question types (algebraic, arithmetic, coordinate geometry) exceeds 95%. The system is configured with CBSE-specific marking rules, including the principle of awarding marks for consistent error carry-forward and accepting alternative correct methods.

How does AI handle alternative methods that are not in the marking scheme?

CBSE's marking scheme typically includes one or two standard solution methods, but students sometimes use valid alternative approaches. AI grading systems handle this in two ways. First, the most common alternative methods are pre-loaded alongside the primary marking scheme, so the AI recognises them automatically. Second, when a student uses a genuinely novel approach that the AI has not seen before, it flags the answer for human review rather than marking it incorrect. The faculty member then evaluates the flagged answer — typically a handful per batch — ensuring that no student is penalised for creative problem-solving. Over time, confirmed alternative methods are added to the system, reducing the flagging rate.

Is AI CBSE maths grading reliable enough for internal school assessments?

AI grading is well-suited for internal assessments, practice tests, and coaching centre evaluations. With 95%+ accuracy on CBSE-pattern questions and the ability to flag uncertain cases for human review, the system produces results that are at least as reliable as manual correction — and significantly more consistent. The key advantage for internal assessments is speed and feedback quality: students receive results the same day, with detailed step-level feedback that is not feasible with manual correction. For formal board examinations, CBSE currently uses human examiners, but AI tools are valuable for the extensive practice and mock testing that precedes the board exam.

What types of CBSE maths questions does AI handle best?

AI grading performs best on questions with well-defined solution structures: algebraic manipulation (factorisation, solving equations, simplification), arithmetic and number theory, coordinate geometry (distance formula, section formula, area calculations), statistics (mean, median, mode from grouped data), and probability. These question types have clear step sequences, explicit marking criteria, and limited ambiguity in correct answers. The AI handles 1-mark MCQs with near-perfect accuracy and 2-5 mark questions with 92-97% accuracy depending on complexity. Questions involving geometric constructions, hand-drawn graphs, and spatial diagrams are the most challenging, as they require visual evaluation beyond text-based OCR.

How does AI-graded feedback compare with a teacher's feedback on CBSE maths papers?

AI-generated feedback is more detailed and more consistent than what most teachers can provide manually. On a typical 80-mark CBSE maths paper with 38 questions, the AI provides step-level marks, specific error identification, and concept-level tagging for every single question. A teacher correcting the same paper manually would spend 8-12 minutes and typically provide only ticks, crosses, and a total score — detailed written feedback on every question for every student is simply not feasible at scale. Where teachers have an edge is in qualitative judgement: recognising when a student's error suggests a deeper conceptual misunderstanding versus a simple computational slip, and adjusting their teaching accordingly. The ideal workflow combines AI grading (for speed, consistency, and detailed feedback) with teacher expertise (for interpreting patterns and adapting instruction).

Sources

- Central Board of Secondary Education (CBSE). Marking Scheme and Sample Question Papers for Class X and XII Mathematics, 2025-26. Official marking criteria and step-mark allocations. https://cbse.gov.in

- National Council of Educational Research and Training (NCERT). Mathematics Textbooks for Class 10 and Class 12. Standard curriculum content aligned with CBSE examination patterns. https://ncert.nic.in

- CBSE. Examination By-Laws and Evaluation Guidelines. Rules governing the evaluation process for CBSE board examinations, including partial credit principles and examiner training protocols.

- Ministry of Education, Government of India. National Education Policy 2020. Policy recommendations for competency-based assessment and technology integration in education. https://www.education.gov.in/sites/upload_files/mhrd/files/NEP_Final_English.pdf

- International Journal on Document Analysis and Recognition (IJDAR). Recent Advances in Handwritten Mathematical Expression Recognition. Survey of OCR and AI technologies for reading handwritten mathematical notation.
