Smart Grading vs Traditional Marking: A Complete Comparison for UK Tutoring Centres

Smart grading vs traditional marking is a central question for any UK tutoring centre owner who wants to scale without burning out their staff. Smart grading uses artificial intelligence to read, assess, and score student work automatically, delivering results in seconds rather than hours. Traditional marking is the familiar process of a human tutor working through a pile of worksheets with a red pen, a mark scheme, and a dwindling supply of patience. Both approaches have their place, and understanding where each excels, and where each falls short, is essential for making the right operational decision for your centre.

This guide breaks down the two approaches side by side, examines five key differences that matter most to tutoring centre operators, analyses the real costs involved, and helps you decide when to stick with traditional marking and when to make the switch to smart grading.

What Is Smart Grading?

Smart grading is the use of artificial intelligence to mark student work automatically. The technology reads student answers — including handwritten responses on paper worksheets — compares them against correct solutions, and returns scored results with targeted feedback. Modern smart grading platforms like IntelGrader use optical character recognition (OCR) trained specifically on student handwriting, combined with machine learning models that understand mathematical notation, spatial relationships between symbols, and multi-step working.

The process is straightforward. A tutor uploads a worksheet and answer key once. Students complete the work on paper, exactly as they would in an exam. The completed worksheet is photographed or scanned and submitted to the platform. Within seconds, the AI returns a fully graded paper with question-by-question feedback and an overall score. Every result is logged automatically, feeding into progress tracking dashboards.
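The "compare against the correct solution" step can be illustrated in miniature. The sketch below is not IntelGrader's implementation: it assumes the handwriting has already been transcribed to text and that each answer is a single number, and the names `parse_answer` and `mark_worksheet` are hypothetical. Exact fractions are used so that `0.5` and `1/2` count as the same answer.

```python
from fractions import Fraction
from typing import Dict, Optional


def parse_answer(text: str) -> Optional[Fraction]:
    """Parse a transcribed answer such as '0.5', '1/2' or '-3' into an exact Fraction.

    Returns None when the text is not a plain number (blank, an expression, etc.).
    """
    cleaned = text.replace(" ", "")
    try:
        return Fraction(cleaned)
    except (ValueError, ZeroDivisionError):
        return None


def mark_worksheet(answers: Dict[str, str], key: Dict[str, str]) -> dict:
    """Mark each question against a hypothetical answer key and total the score."""
    per_question = {}
    for question, correct in key.items():
        given = parse_answer(answers.get(question, ""))
        expected = parse_answer(correct)
        is_correct = given is not None and given == expected
        per_question[question] = "correct" if is_correct else f"incorrect (expected {correct})"
    score = sum(1 for result in per_question.values() if result == "correct")
    return {"per_question": per_question, "score": score, "out_of": len(key)}


if __name__ == "__main__":
    key = {"Q1": "1/2", "Q2": "12", "Q3": "-0.25"}
    student = {"Q1": "0.5", "Q2": "13", "Q3": "-1/4"}
    print(mark_worksheet(student, key))
```

Here Q1 and Q3 are accepted as equivalent forms and Q2 is marked wrong, giving 2 out of 3. A real system also has to handle expressions, units, and method marks, which this sketch deliberately leaves out.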

For a comprehensive introduction to the technology, see our complete guide to what smart grading is.

What Is Traditional Marking?

Traditional marking is the process most educators know intimately. A tutor sits down with a stack of completed worksheets, a mark scheme, and a pen. They work through each paper one at a time — reading the student's answers, comparing them against the expected responses, awarding marks, writing corrections or comments, and tallying the score. The marked papers are then returned to students, typically at the next session.

Traditional marking has been the default approach to assessment for centuries. It requires no technology beyond the mark scheme itself, and it allows the marker to exercise professional judgement — interpreting ambiguous answers, recognising creative approaches, and providing encouragement tailored to each student's personality and confidence level.

The challenge is not quality but scale. When a tutor is marking 10 papers, traditional marking works well. When they are marking 50, 100, or 200 papers per week — as many UK tutoring centre staff are — the approach becomes a bottleneck that constrains every other part of the operation.

Side-by-Side Comparison: Smart Grading vs Traditional Marking

Before examining the differences in detail, here is a direct comparison across the dimensions that matter most to UK tutoring centre operators making the decision between smart grading vs traditional marking.

| Dimension | Traditional Marking | Smart Grading |
|---|---|---|
| Time per worksheet | 3-5 minutes | Under 30 seconds |
| Feedback turnaround | Hours to days (often returned at the next session) | Instant: results within seconds of submission |
| Consistency | Varies by marker, fatigue, time of day, and workload | Identical criteria applied every time, regardless of volume |
| Feedback depth | Depends on individual tutor's effort and available time | Structured, specific, and actionable on every paper |
| Progress tracking | Manual spreadsheet entry, if done at all | Automatic, with dashboards, trend analysis, and parent-ready reports |
| Scalability | Linear: more papers means proportionally more hours | Near-zero marginal effort per additional paper |
| Cost per paper | Tutor time at market rates (typically £12-25/hour) | Fraction of the manual cost as volume increases |
| Bias and fairness | Subject to unconscious marker preferences and fatigue effects | Same criteria-based assessment on every paper, independent of marker or fatigue |
| Handwriting interpretation | Human nuance; can interpret ambiguous writing | AI-powered OCR; flags low-confidence readings for human review |
| Personalisation of comments | Highly personalised when tutor has time | Structured feedback based on error analysis; less personal tone |
| Setup effort | None: tutors already know how to mark | Initial worksheet and answer key upload; minimal ongoing effort |
| Technology required | Pen, mark scheme, patience | Smartphone or scanner, internet connection, smart grading platform |

The table tells a clear story for high-volume centres: smart grading is faster, more consistent, and more scalable. But the picture is more nuanced than a simple table can capture. Let us examine the five differences that matter most.

Five Key Differences That Matter

1. Speed and throughput

The most immediately obvious difference between smart grading vs traditional marking is speed. A worksheet that takes a human tutor 3-5 minutes to mark is processed by an AI grading system in under 30 seconds — and that includes reading the handwriting, evaluating every answer, generating feedback, and logging the results.

To put this in practical terms for a UK tutoring centre: if your centre processes 200 maths worksheets per week (a typical volume for a centre with around 100 active students attending twice weekly and completing one worksheet per session), traditional marking consumes 10 to 17 hours of tutor time. With smart grading, the same 200 worksheets are processed in under two hours of total handling time, most of which is the physical act of photographing the papers rather than waiting for the AI.

That is 8-15 hours per week returned to your tutors. Over a year of roughly 50 working weeks, that represents 400-750 hours: the equivalent of an additional part-time staff member, at a fraction of the cost of a salary.
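The arithmetic behind these figures is simple enough to check for your own centre. This sketch uses the worksheet count, the 3-5 minute range, and the two-hour handling time from the text; the 50-week year is an assumption chosen because it reproduces the 400-750 hour figure, and `weekly_marking_hours` is a hypothetical helper name.

```python
# Inputs from the text; WORKING_WEEKS is an assumption (50 weeks reproduces 400-750 hours).
WORKSHEETS_PER_WEEK = 200
SMART_HANDLING_HOURS = 2   # upper bound for photographing and submitting papers
WORKING_WEEKS = 50


def weekly_marking_hours(worksheets: int, minutes_per_sheet: float) -> float:
    """Tutor hours needed to hand-mark a week's worksheets."""
    return worksheets * minutes_per_sheet / 60


if __name__ == "__main__":
    for minutes in (3, 5):
        manual = weekly_marking_hours(WORKSHEETS_PER_WEEK, minutes)
        saved = manual - SMART_HANDLING_HOURS
        print(f"{minutes} min/sheet: {manual:.1f} h manual, "
              f"{saved:.1f} h/week saved, about {saved * WORKING_WEEKS:.0f} h/year")
```

At 3 minutes per sheet this gives 10 hours of manual marking and 400 hours a year saved; at 5 minutes it gives about 16.7 hours and roughly 733 hours a year, which the text rounds up to 750.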

2. Consistency and fairness

Human markers are inherently variable. The same tutor marks differently at 4 PM versus 10 PM. Different tutors interpret partial credit rules differently. This matters because the quality of feedback is one of the most powerful levers for improving pupil attainment: the Education Endowment Foundation (EEF) Teaching and Learning Toolkit estimates that high-quality feedback adds around six months of additional progress over a year.

Traditional marking introduces variability at every level. Inter-marker variability means two tutors grading the same paper might arrive at different scores. Intra-marker variability means the same tutor grades differently depending on fatigue, mood, and how many papers they have already marked that evening. Presentation can also bias markers unconsciously: neat handwriting may be treated more favourably than messy-but-correct working, and papers near the top of a batch often receive more detailed feedback than those at the bottom of the pile.

Smart grading removes these marker-dependent sources of inconsistency. Every worksheet is assessed against the same criteria, applied in the same way, regardless of when it was submitted or how many papers came before it. A student's score reflects their understanding of the material, not who marked their paper or when.

3. Feedback quality and turnaround

The value of feedback is closely tied to its timeliness. John Hattie's synthesis of over 800 meta-analyses in Visible Learning ranks feedback among the most powerful influences on student achievement, with the strongest effects when it is timely and specific. A correction received 30 seconds after completing a worksheet, while the student still remembers their thought process, is likely to be far more useful than the same correction returned three days later at the next tutoring session.

Traditional marking almost always introduces a delay. Even the most diligent tutor cannot mark papers instantly during a busy session. Worksheets go into a pile, get marked overnight or over the weekend, and come back at the next appointment. By then, the student has moved on, and the feedback becomes a historical record rather than a learning intervention.

Smart grading largely closes this gap. Results are available within seconds. A student can review their mistakes immediately, ask the tutor about a specific error while it is fresh, and attempt a similar problem with the correction in mind. Delayed marking cannot offer this tight a feedback loop.

4. Progress tracking and data

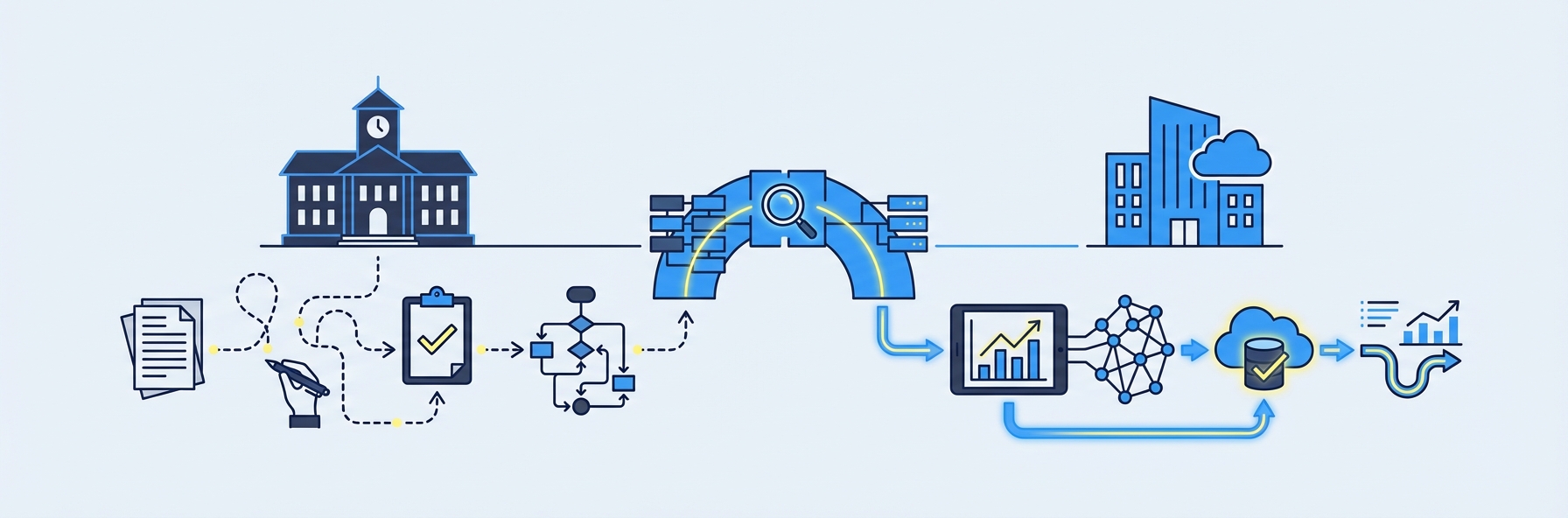

One of the least discussed but most significant differences between smart grading vs traditional marking is what happens to the data after the worksheet is graded.

In a traditional marking workflow, the data — the scores, the specific errors, the patterns of misconception — lives on the paper and in the tutor's memory. If the centre tracks progress at all, it is typically through manual spreadsheet entry: a tutor typing scores into a Google Sheet at the end of the week. This process is error-prone, time-consuming, and produces flat data that is difficult to analyse.

Smart grading generates structured data automatically. Every graded worksheet becomes a data point in a longitudinal record of the student's performance. Over weeks and months, patterns emerge: which topics a student consistently struggles with, which error types recur, and whether performance is trending up or down. This supports the kind of evidence-based, personalised teaching that Ofsted's inspection framework and Department for Education guidance emphasise in schools.

For parent communication, the difference is equally stark. Instead of a vague verbal update at parents' evening — "Jamie is doing well in algebra but needs to work on fractions" — the tutor can share a dashboard showing Jamie's scores across 12 weeks, with topic-level breakdowns that make the progress (or lack of it) unmistakably clear. That transparency builds trust, drives retention, and generates referrals.
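To make "topic-level breakdowns" concrete, here is a minimal sketch of how graded results could be rolled up per topic. The record shape `(week, topic, score)`, the sample data, and the function name `topic_trends` are all hypothetical; the trend is an ordinary least-squares slope in percentage points per week, one simple way of saying whether a topic is improving.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical graded records: (week number, topic, percentage score).
RESULTS = [
    (1, "fractions", 45), (2, "fractions", 55), (3, "fractions", 60),
    (1, "algebra", 80), (2, "algebra", 78), (3, "algebra", 82),
]


def topic_trends(records):
    """Return {topic: (average score, least-squares slope in points per week)}."""
    by_topic = defaultdict(list)
    for week, topic, score in records:
        by_topic[topic].append((week, score))

    summary = {}
    for topic, points in by_topic.items():
        weeks = [w for w, _ in points]
        scores = [s for _, s in points]
        w_bar, s_bar = mean(weeks), mean(scores)
        denom = sum((w - w_bar) ** 2 for w in weeks)
        # A single week of data has no trend, so report a flat slope.
        slope = sum((w - w_bar) * (s - s_bar) for w, s in points) / denom if denom else 0.0
        summary[topic] = (s_bar, slope)
    return summary


if __name__ == "__main__":
    for topic, (avg, slope) in topic_trends(RESULTS).items():
        print(f"{topic}: average {avg:.1f}%, trend {slope:+.1f} points/week")
```

With this sample data, fractions averages about 53% but is climbing 7.5 points a week, while algebra is high and roughly flat: the kind of distinction a dashboard can surface that a single score cannot.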

5. Scalability and operational economics

This is the difference that matters most for centre owners thinking about growth. In a traditional marking model, the economics are brutally linear: more students means more worksheets, which means more marking hours, which means either higher wage costs or longer unpaid hours for existing staff. Growth and marking burden scale in lockstep.

Smart grading breaks that relationship. In labour terms, the marginal effort of grading one additional worksheet with AI is close to zero. A centre can grow from 80 students to 200 students without proportionally increasing its marking workload. The tutors who would have spent their evenings marking are instead available for lesson planning, student support, or, critically, teaching additional sessions that generate revenue.

For a growing UK tutoring centre, this is not a marginal efficiency improvement. It is a fundamental change in the operational model — from one where growth creates overhead to one where growth is absorbed by existing infrastructure.

Cost Analysis: The Real Numbers

Understanding the financial case requires looking beyond the subscription cost of a smart grading platform and comparing total cost of ownership for each approach.

Traditional marking costs

Consider a typical mid-sized UK tutoring centre:

- Weekly worksheet volume: 250 papers

- Average marking time: 4 minutes per paper

- Total weekly marking time: 16.7 hours

- Tutor hourly rate (marking): £14-20/hour (depending on whether marking is paid at the full tutoring rate or a reduced administrative rate)

- Weekly marking cost: £234-£334

- Annual marking cost (48 working weeks): £11,232-£16,032
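The figures in this list can be reproduced with a short calculation. This is a sketch using the list's own inputs; `marking_cost` is a hypothetical helper, and the cost is computed before dividing by 60 to keep the arithmetic exact.

```python
def marking_cost(papers_per_week: int, minutes_per_paper: float,
                 hourly_rate: float, weeks_per_year: int = 48):
    """Return (weekly hours, weekly cost in £, annual cost in £) for hand-marking."""
    weekly_hours = papers_per_week * minutes_per_paper / 60
    weekly_cost = papers_per_week * minutes_per_paper * hourly_rate / 60
    return weekly_hours, weekly_cost, weekly_cost * weeks_per_year


if __name__ == "__main__":
    for rate in (14, 20):
        hours, weekly, annual = marking_cost(250, 4, rate)
        print(f"£{rate}/h: {hours:.1f} h/week, £{weekly:.2f}/week, £{annual:,.0f}/year")
```

Without intermediate rounding this gives roughly £233-£333 per week and £11,200-£16,000 per year; the slightly higher figures in the list come from rounding the weekly hours to 16.7 before multiplying.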

These figures assume that all marking is paid. In reality, many UK tutoring centres expect tutors to mark during unpaid "prep time," which reduces the explicit cash cost but increases the hidden cost in staff dissatisfaction, burnout, and turnover. Replacing a tutor who leaves due to unsustainable workload costs an estimated £1,500-3,000 in recruitment, training, and lost productivity.

Smart grading costs

Smart grading platform costs vary by provider and volume. IntelGrader offers flexible pricing tailored to each centre's needs — to get a specific quote, book a demo. What we can say is that the platform cost for a centre processing 250 worksheets per week is typically a small fraction of the annual manual marking cost calculated above.

Even a conservative estimate suggests the return on investment is substantial:

| Cost Component | Traditional Marking | Smart Grading |
|---|---|---|
| Annual marking labour | £11,232-£16,032 | Minimal (photo/scan handling only) |
| Platform subscription | £0 | Platform-dependent |
| Staff turnover cost | £1,500-3,000 per departure | Reduced: lower burnout |
| Progress tracking overhead | 2-4 hours/week (manual entry) | Included automatically |
| Parent reporting | 1-2 hours/week (manual reports) | Included automatically |

The financial case is strongest for centres processing 150+ worksheets per week, where the annual saving in marking labour alone is likely to exceed the cost of a typical smart grading subscription.
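Because platform pricing is quoted per centre, the useful question is the break-even volume: how many worksheets per week you need before the labour saving covers the subscription. The sketch below is a hypothetical helper, not a pricing tool; plug in the annual quote you receive, the minutes saved per paper net of scanning time, and your marking rate. It ignores the turnover, tracking, and reporting savings in the table above, so it understates the case.

```python
import math


def break_even_papers_per_week(annual_platform_cost: float,
                               minutes_saved_per_paper: float,
                               hourly_rate: float,
                               weeks_per_year: int = 48) -> int:
    """Smallest weekly volume at which annual marking-labour savings cover the platform cost."""
    # Annual £ saved by each worksheet that is marked every week of the year.
    # Multiplying before dividing keeps whole-number inputs exact.
    saving_per_weekly_paper = minutes_saved_per_paper * hourly_rate * weeks_per_year / 60
    return math.ceil(annual_platform_cost / saving_per_weekly_paper)
```

For example, with 4 minutes saved per paper at £15/hour over 48 weeks, each weekly worksheet saves £48 a year, so the break-even volume is simply the annual quote divided by 48, rounded up.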

Case Study Scenario: Greenfield Maths Centre

To illustrate the practical impact, consider a realistic scenario based on common UK tutoring centre operations.

Greenfield Maths Centre is an independent tutoring centre in Birmingham serving 130 students across KS2, KS3, GCSE, and A-level maths. Students attend twice per week, completing one worksheet per session. The centre employs four part-time tutors and one centre manager.

Before smart grading

- Weekly worksheets: 260

- Marking time: 4 minutes per paper = 17.3 hours per week

- Marking distribution: Split across four tutors, each marking approximately 4.3 hours per week

- Marking payment: £15/hour (below tutoring rate), costing the centre £260/week

- Feedback turnaround: 2-4 days (papers returned at the next session)

- Progress tracking: Centre manager enters scores into a Google Sheet every Friday (2 hours/week)

- Parent reporting: Verbal updates at half-termly parents' evenings; no written reports between events

- Staff satisfaction: Two tutors have raised concerns about the marking workload; one is considering leaving

After smart grading

- Weekly worksheets: 260 (unchanged — student experience is identical)

- Marking time: 10-15 minutes per batch to photograph completed papers (approximately 2 hours/week in total, including scanning and quality review)

- Feedback turnaround: Instant — students receive results before leaving the session

- Progress tracking: Automatic; dashboard updated in real time

- Parent reporting: Parents access the dashboard directly; monthly summary emails generated automatically

- Staff satisfaction: Tutors report spending reclaimed time on lesson planning and one-to-one support; no further resignations

The difference

- Hours saved per week: 15+

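- Annual labour saving: Approximately £11,000-£12,500 (£12,480 of marking pay over 48 weeks, or about £11,040 after allowing 2 hours/week of scanning at the same rate)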

- Tutor retention: Improved — reduced burnout, more sustainable workload

- Student outcomes: Faster feedback cycle accelerates learning; data-driven intervention identifies struggling students earlier

- Parent satisfaction: Measurable progress reports increase trust and reduce churn

This scenario is illustrative, but its inputs reflect common operating patterns in UK tutoring centres. The specific numbers will vary by centre, but the direction of the impact should be consistent.
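The Greenfield figures can be traced back to their inputs. This sketch uses only numbers stated in the scenario, plus one assumption: the same 48-week year as the cost analysis. The function name is hypothetical, and the manager's 2 hours/week of spreadsheet entry is deliberately left out of the savings.

```python
# Inputs from the Greenfield scenario.
STUDENTS = 130
SESSIONS_PER_WEEK = 2          # one worksheet per session
MINUTES_PER_PAPER = 4
TUTORS = 4
MARKING_RATE = 15              # £/hour
HANDLING_HOURS_AFTER = 2       # scanning and quality review after the switch
WEEKS_PER_YEAR = 48            # assumption: same 48-week year as the cost analysis


def greenfield_summary() -> dict:
    papers = STUDENTS * SESSIONS_PER_WEEK
    marking_minutes = papers * MINUTES_PER_PAPER
    # Multiply before dividing so the £ figures stay exact in floating point.
    weekly_cost = marking_minutes * MARKING_RATE / 60
    net_minutes_saved = marking_minutes - HANDLING_HOURS_AFTER * 60
    return {
        "papers_per_week": papers,
        "marking_hours": marking_minutes / 60,
        "hours_per_tutor": marking_minutes / 60 / TUTORS,
        "weekly_cost": weekly_cost,
        "hours_saved_per_week": net_minutes_saved / 60,
        "gross_annual_saving": weekly_cost * WEEKS_PER_YEAR,
        "net_annual_saving": net_minutes_saved * MARKING_RATE * WEEKS_PER_YEAR / 60,
    }


if __name__ == "__main__":
    for name, value in greenfield_summary().items():
        print(f"{name}: {value:,.2f}")
```

This gives 260 papers, about 17.3 marking hours (4.3 per tutor), £260 a week, roughly 15.3 hours saved per week, and an annual saving between £11,040 (net of scanning time) and £12,480 (gross).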

Learn more about how smart grading works for UK tutoring centres.

When to Stick with Traditional Marking

Smart grading is not the right answer in every situation. There are contexts where traditional marking retains clear advantages.

Subjective or creative assessments

When the work being assessed requires subjective judgement — creative writing, extended essay responses, open-ended problem solving where multiple valid approaches exist — a human marker brings interpretive nuance that AI cannot fully replicate. Smart grading excels at structured, objective assessment (maths problems with definable correct answers); it is less suited to marking an English literature essay on the themes of Macbeth.

Very low volume

If your centre processes fewer than 30 worksheets per week, the time savings from smart grading may not justify the setup effort and platform cost. At low volumes, a skilled tutor can mark papers quickly enough that automation offers marginal rather than transformational benefit.

Highly complex or non-standard question formats

Questions involving diagrams, graph interpretation, or unusual formats that fall outside the AI's training data may require human review. The best smart grading systems flag these for manual attention, but if the majority of your worksheets involve such formats, the automation rate may be too low to deliver meaningful value.

Centres prioritising the personal touch above all else

Some centres position themselves on the deeply personal relationship between tutor and student, and view hand-marking with personalised comments as part of the service they sell. If your brand promise centres on the tutor-student relationship at every touchpoint — including the marking — then maintaining traditional marking may align better with your market positioning, even at higher cost.

When to Switch to Smart Grading

For most UK tutoring centres, the question is not whether to adopt smart grading but when. Here are the signals that the switch will deliver meaningful value.

Your marking backlog is growing

If worksheets are piling up and feedback is consistently delayed beyond the next session, smart grading addresses the bottleneck directly.

Your tutors are burning out

If marking workload is a factor in staff dissatisfaction or turnover, automating the most tedious part of the role makes the job more sustainable and more attractive to candidates.

You want to grow without adding marking headcount

If your business plan involves increasing student numbers, smart grading means growth does not require proportional increases in marking labour.

Parents are asking for progress data

If parents want more than verbal reassurances — if they want scores, trends, and evidence of improvement — smart grading generates this data automatically without additional administrative effort.

You teach maths at scale

If maths is your primary subject and you process 100+ worksheets per week, you are sitting in the exact use case where smart grading delivers the strongest return. Platforms like IntelGrader are purpose-built for this workflow. Explore the full range of tutoring software solutions to see how smart grading fits into your centre's technology stack.

You want exam-aligned practice with rapid feedback

For centres preparing students for GCSEs, A-levels, or KS2 SATs, smart grading enables high-volume practice under exam-like conditions (handwritten, on paper) with immediate, criteria-aligned feedback. That tight practice-feedback cycle is consistent with the research on timely, specific feedback discussed earlier.

The Hybrid Approach: Getting the Best of Both

The most effective centres do not choose exclusively between smart grading vs traditional marking. They adopt a hybrid model that uses each approach where it is strongest.

Use smart grading for:

- High-volume maths worksheets (arithmetic, algebra, equations, calculus)

- Daily or weekly practice papers where speed and consistency matter

- Exam preparation worksheets aligned to specific mark schemes

- Any workload where you need automatic progress tracking

Use traditional marking for:

- Extended written responses (English essays, creative writing)

- Complex multi-mark questions requiring subjective judgement

- Situations where a personalised, encouraging comment from the tutor carries significant pastoral value

- Pilot or diagnostic assessments where the tutor wants to hand-review every response

This hybrid model gives centres the efficiency of AI where it matters most while preserving the human judgement that adds genuine value in specific contexts. It is not all-or-nothing — and treating it as such would miss the point of both approaches.

Ready to see how smart grading could work alongside your current marking process? Book a free demo of IntelGrader and test it with your own worksheets.

Frequently Asked Questions

Is smart grading accurate enough to replace human marking for maths?

Yes, for the structured maths questions that make up the bulk of tutoring centre worksheets — arithmetic, algebra, equation solving, and standard curriculum content — smart grading accuracy is comparable to that of experienced human markers. The AI evaluates handwritten working step by step, awards partial credit where appropriate, and flags any responses where it has low confidence for human review. In practice, the agreement rate between AI and human graders meets or exceeds the inter-marker reliability typically observed between two different human markers assessing the same paper. The system does not guess on ambiguous cases; it escalates them. For UK tutoring centres, this means you get reliable, consistent grading on the vast majority of papers, with a human safety net for edge cases. For more on how this technology works, see our guide to what is smart grading.
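If you want to test the agreement claim on your own papers, have a tutor and the AI mark the same set of questions and compare. A common approach, sketched below, is to report raw percent agreement alongside Cohen's kappa, which discounts the agreement two markers would reach by chance. The marks are hypothetical (1 = correct, 0 = incorrect per question), and this is not a description of how IntelGrader reports accuracy.

```python
from collections import Counter
from typing import Sequence, Tuple


def agreement_stats(marker_a: Sequence[int], marker_b: Sequence[int]) -> Tuple[float, float]:
    """Percent agreement and Cohen's kappa for two markers scoring the same items."""
    if len(marker_a) != len(marker_b) or not marker_a:
        raise ValueError("need two equal-length, non-empty lists of marks")
    n = len(marker_a)
    observed = sum(a == b for a, b in zip(marker_a, marker_b)) / n
    counts_a, counts_b = Counter(marker_a), Counter(marker_b)
    # Agreement expected by chance, given how often each marker uses each mark.
    expected = sum(counts_a[mark] * counts_b[mark] for mark in counts_a) / (n * n)
    # If both markers only ever used one identical mark, kappa is undefined; treat as perfect.
    kappa = (observed - expected) / (1 - expected) if expected < 1 else 1.0
    return observed, kappa


if __name__ == "__main__":
    human = [1, 1, 0, 1, 0, 1, 1, 0, 1, 1]
    ai = [1, 1, 0, 1, 1, 1, 1, 0, 1, 1]
    observed, kappa = agreement_stats(human, ai)
    print(f"agreement {observed:.0%}, Cohen's kappa {kappa:.2f}")
```

Running the same calculation on two human tutors marking the same papers gives the inter-marker baseline to compare against.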

Will my tutors lose their jobs if I adopt smart grading?

No. Smart grading replaces the most repetitive part of a tutor's job — the marking pile — not the tutor themselves. The skills that make a tutor effective — explaining concepts, building student confidence, adapting lessons in real time, motivating struggling learners — are irreplaceable by technology. What changes is how tutors spend their time. Instead of marking worksheets at midnight, they can invest those hours in lesson planning, one-to-one support, and the kind of high-value teaching that actually moves student outcomes. Most centres that adopt smart grading find that tutor job satisfaction improves because the role becomes more focused on teaching and less on administrative drudgery.

How does smart grading handle the UK maths curriculum specifically?

Smart grading platforms like IntelGrader support maths across all UK key stages, from KS2 arithmetic through to A-level calculus. The AI is trained on the types of questions, notation, and working-out methods used in preparation for SATs, GCSE, and A-level examinations. Answer keys can be configured to follow specific exam board mark schemes (AQA, Edexcel, OCR), including rules for method marks, alternative valid approaches, and common misconceptions. The system does not require proprietary worksheets — you can upload the resources you already use, from textbook exercises to past paper questions, and the AI will grade against whatever marking criteria you specify.

What happens if the AI misreads a student's handwriting?

When the AI encounters handwriting it cannot interpret with sufficient confidence, it flags that specific question for human review rather than guessing. The tutor can then check the flagged response manually and confirm or correct the AI's assessment. This happens in a small percentage of cases, typically where handwriting is genuinely illegible or where unusual notation falls outside the AI's training data. The flagging mechanism means uncertain readings are surfaced for a human rather than scored silently. You get the speed of AI grading on the clear majority of responses, with human oversight where it is needed. Recognition accuracy continues to improve with each model update, and the system is designed to cope with the rushed, imperfect handwriting that real students produce under timed conditions.
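Conceptually, the flagging described above is a confidence threshold. The sketch below assumes a hypothetical recogniser that returns a confidence between 0 and 1 for each reading; the `Reading` type, the `route_readings` function, and the 0.85 default are illustrative choices, not IntelGrader settings.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Reading:
    question: str
    text: str          # what the (hypothetical) recogniser read
    confidence: float  # 0.0-1.0, as reported by the recogniser


def route_readings(readings: List[Reading],
                   threshold: float = 0.85) -> Tuple[List[Reading], List[Reading]]:
    """Split readings into those safe to auto-mark and those flagged for a tutor."""
    auto, flagged = [], []
    for reading in readings:
        (auto if reading.confidence >= threshold else flagged).append(reading)
    return auto, flagged


if __name__ == "__main__":
    page = [Reading("Q1", "12", 0.97), Reading("Q2", "7?", 0.41), Reading("Q3", "x=4", 0.90)]
    auto, flagged = route_readings(page)
    print("auto-marked:", [r.question for r in auto])
    print("for tutor review:", [r.question for r in flagged])
```

The threshold is the design lever: raising it sends more questions to the tutor and fewer uncertain readings through automatically, so a centre can trade a little speed for extra caution.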

Can I use smart grading for subjects other than maths?

Currently, the strongest smart grading platforms — including IntelGrader — specialise in mathematics because maths generates the highest marking volume and presents the most technically demanding handwriting recognition challenge. For other subjects, traditional marking or general-purpose AI tools may be more appropriate today. However, the technology is advancing rapidly. Subject coverage is expanding, with English and science in active development on several platforms. For most UK tutoring centres, maths marking is the dominant pain point, so addressing it with smart grading delivers the greatest return even before other subjects are supported.

Sources

Education Endowment Foundation (2023). Teaching and Learning Toolkit: Feedback. Available at: https://educationendowmentfoundation.org.uk/education-evidence/teaching-learning-toolkit/feedback

Hattie, J. (2009). Visible Learning: A Synthesis of Over 800 Meta-Analyses Relating to Achievement. Routledge.

The Sutton Trust (2019). Private Tuition Polling 2019. Available at: https://www.suttontrust.com/our-research/private-tuition-polling-2019/

Department for Education (2022). School Workforce in England 2022. Available at: https://explore-education-statistics.service.gov.uk/find-statistics/school-workforce-in-england

Ofsted (2024). Education Inspection Framework. Available at: https://www.gov.uk/government/publications/education-inspection-framework

Ready to transform your grading?

See how IntelGrader can save your tutoring centre 10+ hours per week with AI-powered grading.